Reimagining review in a world of preprints

Jan 08

At this year’s ASCB|EMBO cell biology meeting, we convened researchers, funders, publishers, and advocates in a panel discussion focused on making use of peer review on preprints.

The use of preprints in the biomedical sciences has increased exponentially in recent years, and preprinting is becoming common practice in research dissemination.

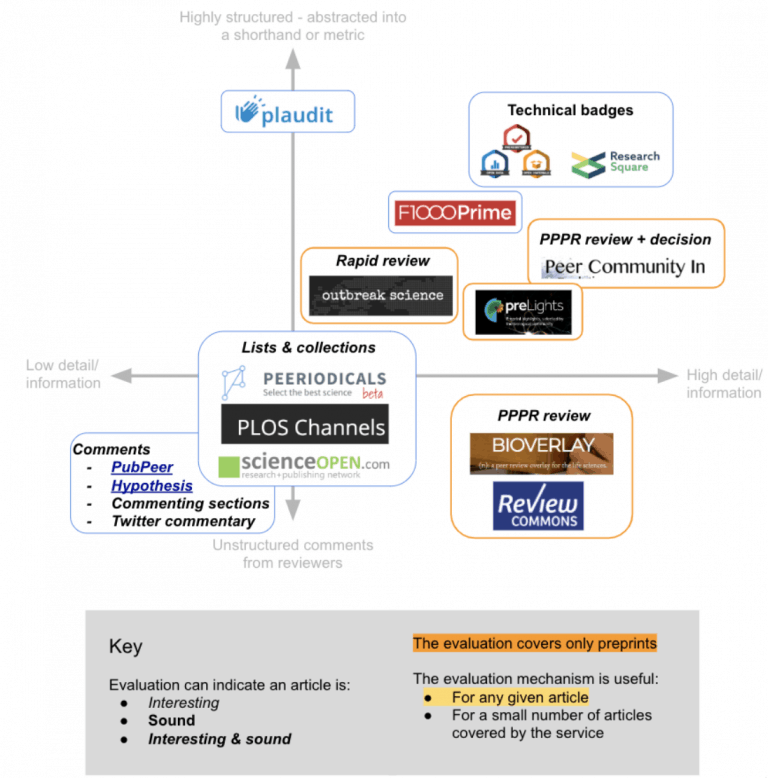

Free from journal brands, preprints enable innovations in peer review at the article level. Because preprints are shared publicly early in the publishing process, authors can receive broader feedback at a point when it is easier to incorporate. However, because public commenting is rare, the full potential of preprints is not yet realized. Many third-party projects are already active, either incorporating preprints into existing editorial workflows or conducting journal-independent peer review of preprints.

These projects vary in their goals and in their level and depth of review. To learn more, check the ReimagineReview listings. At the ASCB|EMBO panel, rather than focusing on individual projects, we discussed how these novel review models can collectively be useful in journal or institutional evaluation.

Preprints can synergize with journals

First, we heard from Veronique Kiermer (Executive Editor, PLOS), who spoke from a publisher’s perspective. PLOS has been supportive of preprints and published peer review: the publisher encourages authors to post preprints and facilitates their deposition into bioRxiv by performing quality checks of the manuscript, which avoids duplicating that effort at bioRxiv. Just under 20% of manuscripts submitted to PLOS are also preprints (compare this to ~3% of all material appearing on PubMed). As a part of the greater vision of open science at PLOS, authors can choose to publish review reports. On average across all PLOS journals, 40% of authors opt in to publishing review reports. Reviewers can also associate their reviewing activity with ORCID and choose to sign their reviews. Recently, PLOS announced a new initiative in which PLOS editors are encouraged to supplement organized peer review with community comments on the preprint.

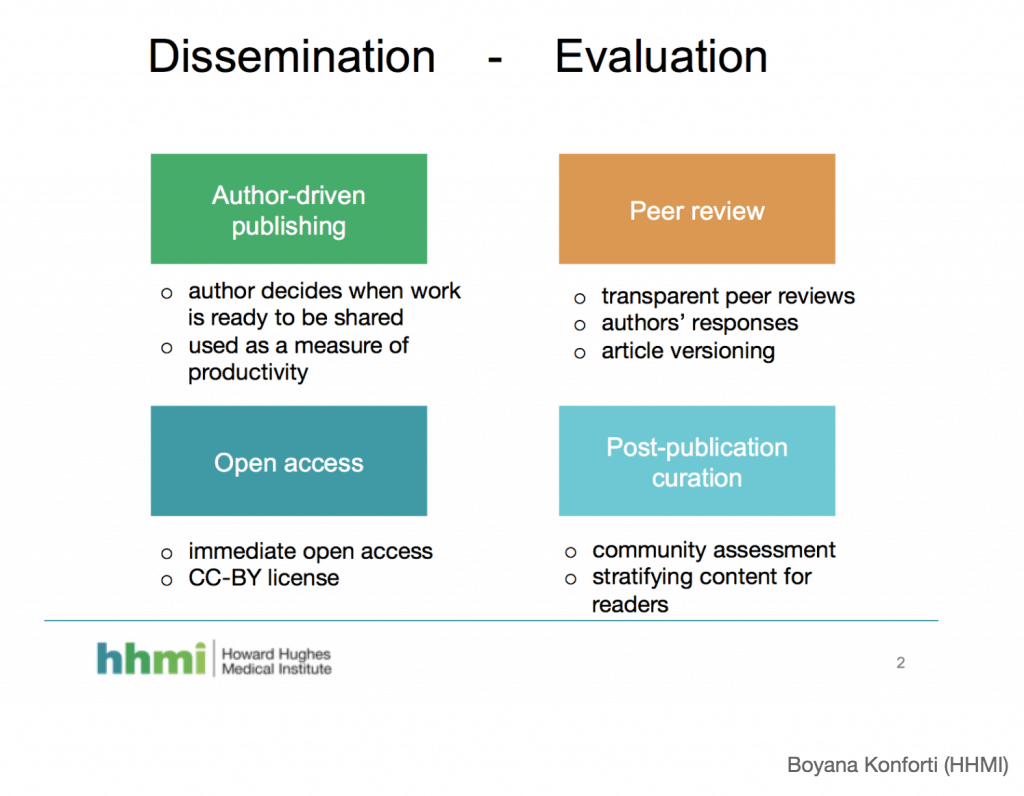

Decoupling dissemination and evaluation of research

Our second speaker, Boyana Konforti (Director, Scientific Strategy & Development, HHMI), shared the funder’s vision of journal-agnostic peer review. At the moment, evaluation precedes publication and can therefore preclude public availability of the research. In HHMI’s vision, all primary research articles should be openly available immediately upon publication under a license that allows free re-use of the work, subject only to attribution. Transparent peer review and curation signals would follow publication, allowing the entire community to weigh in. The future HHMI envisions is one where article-level curation signals eventually replace journal metrics in research assessment.

Assessing the broader impacts of funding

Next, Jonah Cool (Science Program Officer, CZI) explained how preprints and other scientific outputs help inform the funder’s decisions. All CZI-funded projects must be deposited as preprints prior to peer review. Acknowledging that many research outcomes are currently unnecessarily shoehorned into the shape of a manuscript, CZI considers other outputs, such as developed technologies and methodologies, valuable and important for evaluation. CZI also welcomes scientists to include the feedback on their preprints in grant applications and reports. Supporting open access and preprints is a part of CZI’s commitment to accelerating the pace of science, with a priority on increasing the utility of funded research outcomes.

Reviewer contribution should be better appreciated in evaluation

Our fourth speaker, Anna Hatch (Community Manager, DORA), spoke about how credit for peer review could be used to inform the evaluation of scientists. Crediting reviewers is challenging due to traditionally opaque review processes. We should consider looking at the quality as well as the quantity of peer review, but evaluating the former is challenging when the text of reviews is inaccessible. Currently, many universities and institutes do not have clear statements on how peer review activity will contribute to evaluation. Importantly, we need to remind ourselves that if peer review is considered in scientific evaluation, access to peer review opportunities is not equitable for all researchers.

Useful feedback and increased visibility to discoveries for scientists

Last but not least, we heard Shawn Ferguson (Associate Professor of Cell Biology and Neuroscience, Yale University) describe his transition from being a preprint skeptic to committing to preprint all of his lab’s work. After posting three initial preprints, Shawn appreciated the benefits of sharing discoveries 6-8 months prior to journal publication. During this time, he could see usage and readership of the preprints grow to hundreds of downloads. Furthermore, one of the preprints was highlighted and reviewed in preLights, which provided positive feedback and increased visibility. He was given the opportunity to present his preprinted work at a Gordon Research Conference, and the work was included in his evaluation for tenure.

Questions and answers

After hearing from each of the panelists, we moved into a question and answer period. Highlights are summarized below.

Jessica Polka: How does peer review of a preprint differ from peer review of a journal-submitted manuscript?

Veronique shared that peer review of a preprint can cover smaller parts of the work rather than the manuscript as a whole. Such granular review enables distinct evaluations of the question, the conclusions, and specific aspects of the methodology such as code and statistics. Boyana added that peer review of preprints can focus on improving the paper. This uncouples evaluating the quality of the work from its suitability for a journal, which reduces the reviewer’s role as gatekeeper. In addition, Shawn noted that peer review of preprints can be an extended discussion of the work with the greater research community.

Richard Sever (bioRxiv, CSHL Press): Should everything be peer reviewed? What fraction, between 0 and 100%?

While the panelists declined to give a firm answer, many clearly felt that peer review is essential, but not for all manuscripts.

Vivian Siegel (MIT): What is the most important information to evaluate in peer review?

Veronique answered that peer review should evaluate whether the methodology is appropriate and the conclusions are sound and justified. Boyana remarked that it is important to identify which parts of the work are strong and which need further work, noting that these signals of quality can accumulate over time. Peer review should enable the broader community to build on top of the work, added Jonah.

Looking ahead

What became clear from this session is that publishers and funders are actively working towards scientist-driven dissemination and peer review. Peer review of preprints can inform many existing publishing workflows and institutional/grant evaluations. Insights from these new peer review experiments can inform evidence-based modifications to more established review models that will help us improve how scientific discoveries are validated and curated.

The next steps in this transition require widespread awareness and adoption of preprint peer review and commenting in the scientific community. Some simple actions you can take as a researcher to make this a reality are:

- Learn about the projects listed on ReimagineReview

- Take part in reviewing preprints and published manuscripts

- Submit your manuscript to peer review trials and experiments

- Read and consider peer review of preprints when evaluating an article

Help us continue the discussion with the hashtag #ReimagineReview.

Victoria Yan and Jessica Polka

victoria.yan@asapbio.org